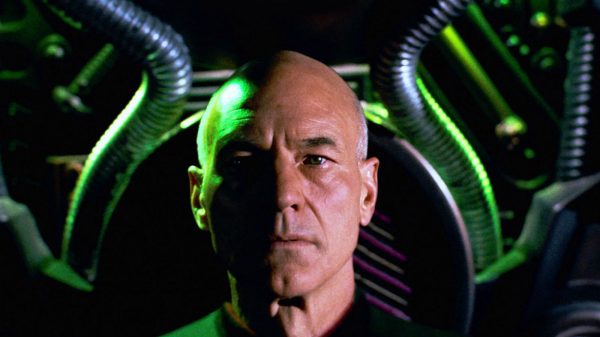

Surely, fans of Star Trek: The Next Generation will remember LeVar Burton’s character, Geordi La Forge, one of the show’s most influential and inspiring characters. By today’s standards, a person born blind would be excluded from many opportunities because of this disability. In keeping with the humanist themes of Star Trek, it is fitting to imagine a disability we face today on Earth being overcome in the future. In fact, it stands as a testament to human courage and ingenuity to see someone as brave and capable as Geordi rise to the occasion and overcome adversity, due in no small part to his VISOR (Visual Instrument and Sensory Organ Replacement).

The VISOR not only allows Geordi to see when his eyes cannot, but it even allows him to analyze his surroundings in wavelengths that are imperceptible to the human eye.

In the season one TNG episode “Heart of Glory,” Geordi uplinked his VISOR to a transmitter so the bridge crew could see what he saw on an away mission. The images were a spectacular show of bright colors and vibrant hues representing infrared and ultraviolet sources, and Captain Picard summed up the display in a single word: “Extraordinary!” The scene roused envy, awe, and appreciation rather than pity among Geordi’s friends, who came to see his blindness as more of a gift than a disability, as Picard’s reflection makes clear: “Now I’m beginning to understand him [Geordi].” It demonstrates that the best way to learn from others is to put oneself in their shoes and see what they see.

How is Geordi’s VISOR related to Google Goggles and Project Glass, and what makes it so remarkable? Imagine that more than 20 years ago you watched that episode when it first aired, and someone next to you said: “I bet Google is going to come out with a device or an app that allows computers to compile and visualize data from their surroundings and interpret reality in the same way our brains make sense of everything around us. Amazing, huh?” Your first reaction might have been: “What’s a Google?” All jokes aside, in the 1960s you might have scoffed if a friend had said that Star Trek communicators would turn into cell phones more than thirty years later, and that the technology would become so advanced that everyone would have one. Why should we expect any less of Google Goggles? Why shouldn’t we expect this new invention to become the forerunner of Geordi’s VISOR?

What is Google Goggles? Google Goggles is the next generation of computer technology. Just as smartphones and iOS devices were the next-generation advance that combined cell phones and computers, Google Goggles is the next phase in computer technology, allowing the user to compile information from a single image or a collection of words and phrases. The process is technically a lot more complex than I’ve made it sound, so the best way to explain it is with an analogy.

When you look at an object, your brain analyzes it and synthesizes an accurate representation of it in your mind; it is a way of bringing reality within the scope and grasp of your mind. You can look at something as simple as an apple and understand the concepts of color, taste, texture, and more just from looking at it and experiencing it (i.e., seeing the apple and relating sight to your other senses). Your brain compiles information from that experience, analyzes it, integrates it into your conceptual understanding, and reacts to it; this information is both useful and necessary for your survival as an autonomous, free-willed individual. You may not notice it, but looking at an image and extracting useful data from it is a complicated, multifaceted process, not unlike the way a computer analyzes a data set to return a logical, mathematical conclusion. Just as a calculator uses a simple algorithm to determine that 1 + 1 equals 2, your brain uses a highly advanced algorithm to understand and form concepts from reality. Nature has had millions of years of trial and error to evolve a brain as complex as ours from the bottom up, but it is tremendously more difficult for scientists to work from the top down to build a brain-like computer that can take a picture of an apple, break that image into bits of information, and form concepts of taste, color, and texture from it. It would be like asking your digital camera to grasp the concept of “food” just from the image of an apple. All a camera can do is take a picture, render that image into bits of digital information, and re-render that information into a visual representation of reality; it can’t analyze or synthesize it beyond that. It can take a snapshot of a human being eating an apple, but it can’t tell you how the apple is conceptually related to the human being; the software of a camera is direct and limited, while the software of a human brain is far more complex.

This is why Google Goggles is so extraordinary! It is an app that analyzes images taken by a camera phone for key words and phrases. We already have smartphone technology that can analyze a barcode, interpret the code on a very minimal level (by minimal, I mean preprogrammed), and return a webpage linked to the image. What is so remarkable about Google Goggles is that it can do more than analyze a barcode: it can recognize words and search for key phrases on the internet to find even more relevant information. Where earlier smartphone apps were limited to preprogrammed software for reading barcodes, Google Goggles is the next step in computer evolution, able to analyze whole words and sentences from a picture. It may not grasp the full meaning of a sentence, but it can at least identify the individual words in it. Object identification (such as distinguishing an apple from a human) gives the app more difficulty, but who’s to say the current program can’t be improved to make that possible eventually?

Before, taking a picture with a camera phone gave you nothing more than an image; now Google Goggles lets you photograph an object and retrieve more detailed information than the image alone reveals. Of course, it isn’t perfect: there are certain things it can’t do, and its object recognition is nowhere near as sophisticated as its word and phrase identification, but the software can still do amazing things.

The video below explains more about Google Goggles.

Watch the video Google released to hype the development of Project Glass below.

Obviously, if we can design a computer program that analyzes its surroundings for conceptual feedback, then imagine the possibilities for artificial intelligence in the future. This is very much Science Fact, no doubt, but why the reference to Geordi’s VISOR? How are the two related? Well, the VISOR works in much the same way: it picks up visual information from the environment in more wavelengths than the human eye can detect and translates those signals into a form the brain can understand. In a sense, the VISOR is a piece of technology that conceptualizes its environment in a form more accessible to the human brain.

The main differences are that (1) Google Goggles is more sophisticated in the sense that the program does the “thinking” for you, and (2) the VISOR can pick up wavelengths outside the visible region of the electromagnetic spectrum. I can see huge potential for Google Goggles as a visual aid for the blind sometime in the future. If neuroscientists can bypass the eyes and deliver visual-sensory input directly to the brain’s analytical regions (also known as association areas), then there is no reason not to expect future microcomputers that could alleviate blindness in those who cannot see, as well as offer smartphone and iPhone users a very interesting and useful app.

Tony

April 22, 2012 at 10:02 pm

Truly fascinating. When I first saw these things, I thought they were just a fad, but then I began to think about it: if we can bypass deafness, we can bypass blindness. I sincerely hope that this is the path to doing so.

My God, we’ll be in Star Trek in no time.

Dave

April 23, 2012 at 3:48 am

I keep reading articles about these glasses, but I’m beginning to think it’s a big marketing scam to raise their stock price, and here’s why. Google is supposed to have these available to buy at the end of this year or the beginning of next, but there have been zero unofficial images of them or even parts of them. There seem to be no real technical specifications I can find for them, and the only people who have talked about using them are marketing people from Google. I really hope I’m wrong, but I can see this being one of those gadgets that never really turns up.

Jenny

April 24, 2012 at 3:17 am

I believe it will come true, given how science and technology are developing now.